The ability to identify a musical track from a brief and imperfect recording has long appeared to be an almost intuitive capability of modern systems. In practice, it is neither intuitive nor approximate. It is the result of a carefully engineered transformation of raw audio into a representation that is both computationally efficient and statistically robust. The system described in Avery Li-Chun Wang's 2003 work on audio fingerprinting, the algorithm behind Shazam, provides a precise account of how this is achieved, demonstrating how signal processing, combinatorial feature design, and indexed retrieval converge to solve a problem that would otherwise be intractable at scale.

At the level of raw data, audio presents itself as a continuous waveform: a sequence of amplitude values sampled at high frequency. Two recordings of the same piece of music rarely resemble one another in this representation. Environmental noise, compression artifacts, variations in recording hardware, and differences in playback conditions introduce distortions that render direct waveform comparison unreliable. The central challenge, therefore, is not one of matching signals, but of identifying a representation in which equivalence can be detected despite these perturbations.

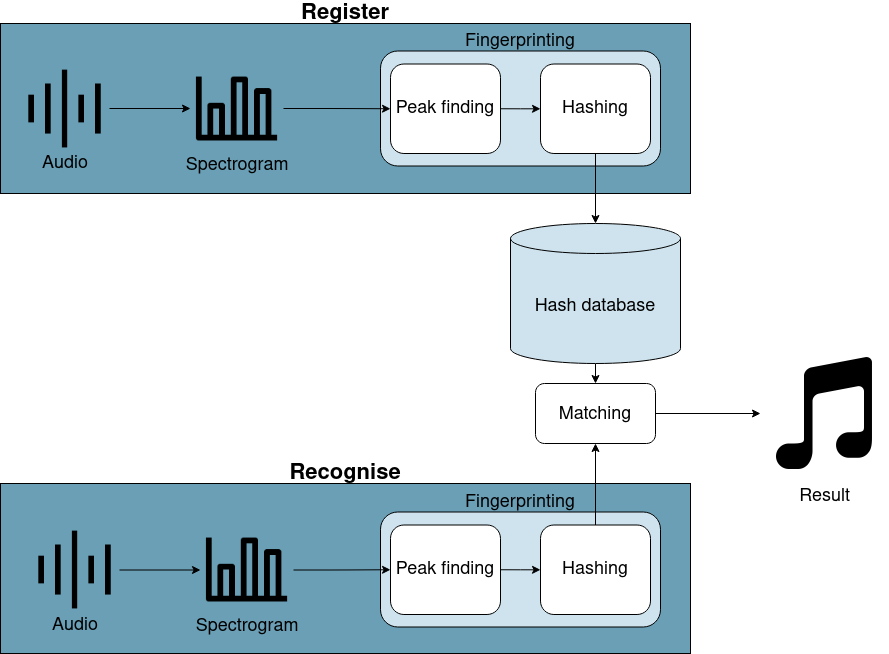

The solution begins with a transformation of the signal into the frequency domain. Any audio signal, regardless of its apparent complexity, can be expressed as a superposition of sinusoidal components. The Fourier Transform provides the mechanism for this decomposition, mapping the signal from a time-based representation into one defined by frequency and amplitude. However, because the spectral composition of music evolves over time, a single global transform is insufficient. Instead, the signal is segmented into short, overlapping windows, and the Fourier Transform is applied to each segment. This procedure, known as the Short-Time Fourier Transform, produces a spectrogram: a two-dimensional representation in which time and frequency form orthogonal axes, and amplitude is encoded as intensity.
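The windowing procedure described above can be sketched in a few lines. This is a minimal illustration rather than the implementation from Wang's paper: the function name `stft_magnitudes`, the window size, the hop length, and the choice of a Hann window are all assumptions, and the naive DFT is used only to keep the example free of dependencies (a practical system would use an FFT library).

```python
import cmath
import math


def stft_magnitudes(samples, window_size=64, hop=32):
    """Return a list of frames; each frame holds one magnitude per frequency bin."""
    # A Hann window tapers each segment to reduce spectral leakage (assumed choice).
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / (window_size - 1))
              for n in range(window_size)]
    frames = []
    # Consecutive segments overlap because hop < window_size.
    for start in range(0, len(samples) - window_size + 1, hop):
        segment = [samples[start + n] * window[n] for n in range(window_size)]
        frame = []
        # Naive DFT over the non-negative frequency bins 0 .. window_size / 2.
        for k in range(window_size // 2 + 1):
            acc = sum(segment[n] * cmath.exp(-2j * math.pi * k * n / window_size)
                      for n in range(window_size))
            frame.append(abs(acc))
        frames.append(frame)
    return frames


# A pure 1000 Hz tone sampled at 8000 Hz: bin spacing is 8000 / 64 = 125 Hz,
# so the energy should concentrate in bin 1000 / 125 = 8.
sample_rate = 8000
tone_hz = 1000.0
samples = [math.sin(2 * math.pi * tone_hz * t / sample_rate) for t in range(512)]
spectrogram = stft_magnitudes(samples)
loudest_bin = max(range(len(spectrogram[0])), key=lambda k: spectrogram[0][k])
print(len(spectrogram), len(spectrogram[0]), loudest_bin)
```

Each inner list is one column of the spectrogram: its position in the outer list is the time index, and its own indices are frequency bins.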

The spectrogram constitutes a rich but highly redundant representation. While it captures the full temporal and spectral structure of the signal, it is too dense to be used directly for large-scale matching. The key insight in Wang’s formulation is that most of this information is unnecessary for identification. Instead, one can retain only those components of the signal that are both prominent and stable. These are the local maxima in the spectrogram, corresponding to frequency-time points where the energy is higher than in the surrounding neighborhood. Such peaks represent dominant tonal structures and tend to persist even under substantial noise and distortion.

By extracting only these peaks, the system reduces the spectrogram to a sparse constellation of points in the time–frequency plane. This representation is significantly more compact and, more importantly, exhibits invariance properties that are essential for robust matching. The omission of amplitude information further enhances stability, as it removes sensitivity to recording volume and equalization effects.
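As a rough sketch of this reduction, the function below keeps only the coordinates of cells that are strictly larger than every cell in their surrounding neighborhood. The name `find_peaks`, the square neighborhood, and the `min_magnitude` floor are illustrative assumptions; the source does not specify how the neighborhood is defined or how peak density is controlled.

```python
def find_peaks(spectrogram, neighborhood=1, min_magnitude=0.0):
    """Return (time_index, freq_bin) pairs that are strict local maxima.

    `spectrogram` is a rectangular list of frames, each a list of magnitudes.
    Only coordinates are returned; the amplitude itself is discarded.
    """
    n_frames = len(spectrogram)
    n_bins = len(spectrogram[0]) if n_frames else 0
    peaks = []
    for t in range(n_frames):
        for f in range(n_bins):
            value = spectrogram[t][f]
            if value <= min_magnitude:
                continue  # ignore cells at or below the floor
            is_peak = True
            for dt in range(-neighborhood, neighborhood + 1):
                for df in range(-neighborhood, neighborhood + 1):
                    if dt == 0 and df == 0:
                        continue
                    tt, ff = t + dt, f + df
                    inside = 0 <= tt < n_frames and 0 <= ff < n_bins
                    if inside and spectrogram[tt][ff] >= value:
                        is_peak = False
            if is_peak:
                peaks.append((t, f))
    return peaks


# Toy spectrogram: 3 time frames by 4 frequency bins.
grid = [
    [0.1, 0.2, 0.1, 0.0],
    [0.2, 0.9, 0.3, 0.1],
    [0.1, 0.3, 0.2, 0.7],
]
constellation = find_peaks(grid)
print(constellation)
```

Scaling every value in `grid` by the same positive factor leaves the returned coordinates unchanged (provided the values stay above `min_magnitude`), which illustrates why dropping amplitude removes sensitivity to recording volume.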

However, individual peaks carry limited discriminative power. A single frequency at a given time is insufficient to uniquely characterize a piece of music. To address this, the system constructs higher-order features by establishing relationships between peaks. Each peak is treated as an anchor point and is paired with other peaks within a defined temporal neighborhood. For each such pair, a tuple is formed consisting of the anchor frequency, the target frequency, and the temporal offset between them. This tuple is encoded into a compact hash, typically within a fixed-width integer representation. The anchor's absolute timestamp is stored alongside the hash and is used during the matching phase to confirm that candidate matches align consistently in time.
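The pairing and encoding step might look like the sketch below. The 32-bit layout (10 bits per frequency bin, 12 bits for the time difference), the `fan_out` limit, the `max_dt` window, and the function names are assumptions chosen for illustration, not values taken from the source.

```python
FREQ_BITS = 10   # assumed width for each frequency bin
DT_BITS = 12     # assumed width for the time difference


def pack_hash(anchor_freq, target_freq, dt):
    """Pack (anchor frequency, target frequency, time delta) into one integer."""
    freq_mask = (1 << FREQ_BITS) - 1
    dt_mask = (1 << DT_BITS) - 1
    return (((anchor_freq & freq_mask) << (FREQ_BITS + DT_BITS))
            | ((target_freq & freq_mask) << DT_BITS)
            | (dt & dt_mask))


def unpack_hash(value):
    """Inverse of pack_hash, useful for debugging."""
    freq_mask = (1 << FREQ_BITS) - 1
    dt_mask = (1 << DT_BITS) - 1
    return ((value >> (FREQ_BITS + DT_BITS)) & freq_mask,
            (value >> DT_BITS) & freq_mask,
            value & dt_mask)


def make_hashes(peaks, fan_out=3, max_dt=64):
    """Pair each anchor with up to `fan_out` later peaks.

    `peaks` is a list of (time_index, freq_bin). Returns a list of
    (hash, anchor_time) so the anchor's absolute time travels with the hash.
    """
    ordered = sorted(peaks)  # sort by time, then frequency
    hashes = []
    for i, (t1, f1) in enumerate(ordered):
        paired = 0
        for t2, f2 in ordered[i + 1:]:
            dt = t2 - t1
            if dt > max_dt:
                break        # later peaks are even further away
            if dt == 0:
                continue     # only pair with peaks strictly later in time
            hashes.append((pack_hash(f1, f2, dt), t1))
            paired += 1
            if paired == fan_out:
                break
    return hashes


example_peaks = [(0, 10), (2, 20), (5, 15)]
example_hashes = make_hashes(example_peaks, fan_out=2)
print([(unpack_hash(h), t) for h, t in example_hashes])
```

Because the hash depends only on the two frequencies and the time difference between them, the same pair of peaks yields the same hash wherever the snippet begins within the track; only the stored anchor time differs.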

This combinatorial pairing process is central to the system’s effectiveness. While a single peak encodes only a small amount of information, the relationship between two peaks captures a fragment of the structural pattern of the audio. By generating multiple such relationships for each anchor, the system increases the entropy of the representation, producing fingerprints that are both distinctive and reproducible. Even in the presence of noise, compression, or partial occlusion, many of these peak relationships remain intact, allowing the same hashes to be generated from both the original track and a degraded recording.

The collection of these hashes forms the basis of the indexing strategy. During the ingestion phase, hashes derived from each track are stored in a large lookup structure, where each hash key is associated with one or more occurrences, identified by track identifiers and temporal offsets. During query processing, the same hashing procedure is applied to the input snippet. Each resulting hash is used to query the index, retrieving candidate matches from the database.
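A minimal in-memory version of such a lookup structure is sketched below. The class name `FingerprintIndex` and its methods are hypothetical, and a Python dictionary stands in for whatever storage a production system would use.

```python
from collections import defaultdict


class FingerprintIndex:
    """Maps each hash to the (track_id, anchor_time) pairs where it occurs."""

    def __init__(self):
        self._table = defaultdict(list)

    def add_track(self, track_id, hashes):
        """Ingest a track given its list of (hash, anchor_time) pairs."""
        for hash_value, anchor_time in hashes:
            self._table[hash_value].append((track_id, anchor_time))

    def lookup(self, hash_value):
        """Return every stored occurrence of a hash (empty list if unseen)."""
        return list(self._table.get(hash_value, []))


index = FingerprintIndex()
index.add_track("song_a", [(101, 50), (202, 53)])
index.add_track("song_b", [(101, 7)])
print(index.lookup(101))
print(index.lookup(999))
```

A single hash can map to several tracks, as hash `101` does here, which is why one lookup produces candidates rather than a final answer.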

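The identification process is not based on isolated matches, but on the aggregation of consistent evidence. When a particular track is present in the database, multiple hashes from the query will correspond to hashes from that track, and the associated time offsets will exhibit a consistent alignment: for every true match, the difference between the anchor time in the stored track and the anchor time in the query is approximately the same, because it equals the position in the track at which the snippet begins. Spurious matches, by contrast, produce offset differences that scatter. By detecting clusters of matches with similar offset differences, the system can reliably identify the correct track, even when the query contains noise or only a partial segment of the original audio.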
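One simple way to detect such clusters is to count votes per (track, offset difference) pair and take the largest count. The sketch below assumes a plain dictionary as the index and exact integer offsets; the function name `best_match` and the sample data are invented for illustration.

```python
from collections import Counter


def best_match(index, query_hashes):
    """Vote on (track_id, track_time - query_time) and return the winner.

    `index` maps hash -> list of (track_id, track_time).
    `query_hashes` is a list of (hash, query_time).
    Returns (track_id, offset, votes), or None if nothing matched.
    """
    votes = Counter()
    for hash_value, query_time in query_hashes:
        for track_id, track_time in index.get(hash_value, ()):
            # True matches share one offset; accidental matches scatter.
            votes[(track_id, track_time - query_time)] += 1
    if not votes:
        return None
    (track_id, offset), count = votes.most_common(1)[0]
    return track_id, offset, count


toy_index = {
    101: [("song_a", 50), ("song_b", 7)],
    202: [("song_a", 53)],
    303: [("song_a", 60), ("song_b", 90)],
}
query = [(101, 0), (202, 3), (303, 10)]
result = best_match(toy_index, query)
print(result)
```

Here `song_b` shares two hashes with the query, but at offsets 7 and 80, so neither accumulates more than one vote, while all three `song_a` matches agree on an offset of 50. A real system would likely also apply a minimum vote threshold before reporting a match.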

A defining characteristic of this approach is its computational efficiency. Each hash lookup is performed in expected constant time, essentially independent of the size of the database. This is achieved through the use of hash-based indexing, which allows direct access to candidate matches without the need for sequential search. As a result, the time required to process a query depends primarily on the number of hashes generated from the snippet and on the number of candidate entries those hashes retrieve, rather than on the total number of tracks stored in the system. This property enables the system to scale to millions of tracks while maintaining near-instantaneous response times.

At the system level, additional considerations ensure that this theoretical efficiency is realized in practice. Data is partitioned across multiple storage units, frequently accessed segments are maintained in memory to minimize latency, and query processing is distributed to handle high volumes of concurrent requests. These architectural choices complement the underlying algorithm, ensuring that performance remains stable under real-world conditions.

Beyond its immediate application, the Shazam system illustrates a broader principle that is central to data science and computational design. The difficulty of a problem is often determined not by the volume of data involved, but by the form in which that data is represented. By transforming raw audio into a sparse, relational structure of spectral features, the system converts a complex pattern-matching task into a sequence of simple lookup operations. The effectiveness of the solution lies not in exhaustive computation, but in the selection of a representation that renders the problem tractable.

In this sense, the system exemplifies the role of abstraction in large-scale data processing. It demonstrates how careful feature design, grounded in domain knowledge and implemented through efficient data structures, can yield solutions that are both elegant and scalable. What appears, at the level of user experience, as instantaneous recognition is in fact the result of a sequence of precise transformations that reconcile robustness with efficiency.